Balancing Automation with Oversight in Remediation Workflows

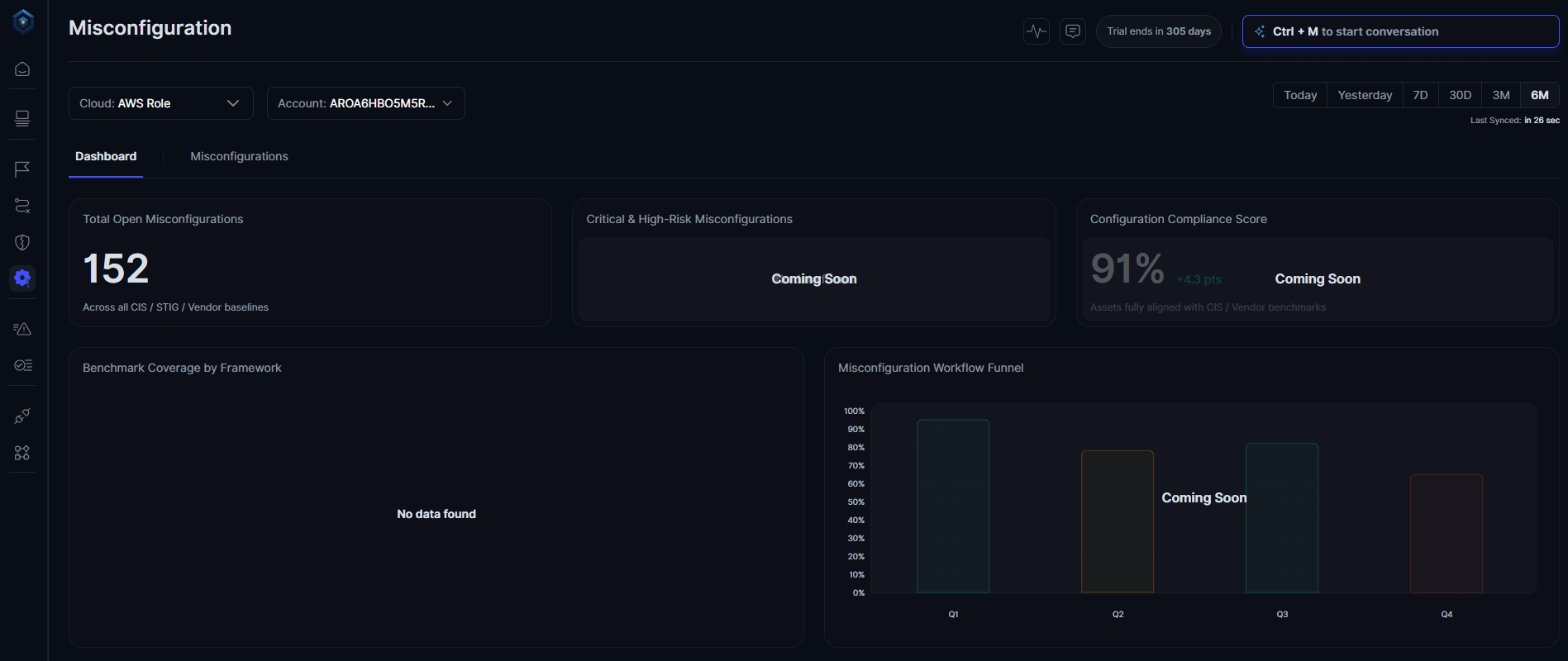

Secure.com's Digital Security Teammate handles the repetitive 70% (triage, enrichment, and routine remediation) so L1 and L2 analysts can focus on decisions that actually need a human.

71% of SOC analysts say they're burned out — and it's not because the job is hard. It's because most of their day goes to tasks that a machine could handle. Automation can fix that, but only if the right human checks stay in place.

Every shift, L1 and L2 analysts face the same grind: pull the alert, enrich with context, cross-reference logs, open a ticket, and repeat. Most of that work never touches a real threat.

64% of SOC analysts say manual work takes up more than half of their time. More than half of those tasks (enrichment, triage, ticket creation, basic containment) follow a predictable, repeatable pattern. That's exactly what automation was built for.

L1 analysts spend the bulk of their time sorting through noise. Most alerts are false positives — up to 83% in typical SOC environments (industry research). Chasing them leaves little time for the ones that actually matter.

L2 analysts aren't doing much better. They handle escalations, but most of what gets sent up the chain didn't need a human to look at it in the first place. Poor triage at L1 creates a bottleneck that breaks the whole workflow.

The core issue isn't volume. It's that repetitive, low-judgment tasks are eating into the time analysts need for real investigation work. Alert fatigue sets in fast, and missed threats follow.

Automation works best when the task is well-defined, high-frequency, and low-risk. It breaks down fast when the situation is ambiguous or the stakes are high.

Tasks that are safe to automate:

Tasks that still need a human:

The line between these two categories should be documented, tested, and reviewed — not assumed. One misconfigured automation on a production system can cause more damage than the threat it was meant to stop.
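One way to keep that line documented and testable is to write it down as data. The sketch below is a hypothetical policy table, not Secure.com's configuration. The task names are borrowed from examples mentioned elsewhere in this article, and `Mode`, `AUTOMATION_POLICY`, and `mode_for` are illustrative names. Anything not listed defaults to human handling.

```python
from enum import Enum


class Mode(Enum):
    AUTO = "auto"              # runs without interruption
    APPROVAL = "approval"      # pauses for a human sign-off
    HUMAN_ONLY = "human_only"  # never automated


# Hypothetical policy table; task names are illustrative assumptions.
AUTOMATION_POLICY = {
    "alert_enrichment": Mode.AUTO,
    "false_positive_closure": Mode.AUTO,
    "ticket_creation": Mode.AUTO,
    "containment_on_production": Mode.APPROVAL,
    "threat_hunting": Mode.HUMAN_ONLY,
}


def mode_for(task: str) -> Mode:
    """Return the documented mode; unlisted tasks fall back to human-only."""
    return AUTOMATION_POLICY.get(task, Mode.HUMAN_ONLY)


if __name__ == "__main__":
    for task in ("alert_enrichment", "containment_on_production", "new_untested_task"):
        print(task, "->", mode_for(task).value)
```

Because the policy is plain data, it can be version-controlled and checked in review like any other change, and the safe default means a forgotten entry fails closed.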

Automated security investigations can cut manual investigation workload by up to 70%, with MTTR dropping 45-55% as a result (based on Secure.com customer deployments). That's real time freed up for work that requires judgment.
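To make those percentages concrete, here is a small worked calculation. The 4-hour baseline MTTR is an assumed figure for illustration, not a number from the deployments cited above. Only the 45-55% range comes from the text.

```python
def reduced_mttr(baseline_hours: float, reduction_pct: float) -> float:
    """MTTR after applying a percentage reduction to a baseline."""
    return baseline_hours * (1 - reduction_pct / 100)


if __name__ == "__main__":
    baseline = 4.0  # assumed baseline MTTR in hours (illustrative only)
    for pct in (45, 55):
        print(f"{pct}% reduction: {reduced_mttr(baseline, pct):.1f} h")
```

Under that assumed baseline, the range works out to roughly 2.2 hours at the low end of the improvement and 1.8 hours at the high end.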

"Human oversight" doesn't mean slowing everything down for approvals. It means building the right checkpoints in the right places.

For routine actions (closing a false positive, enriching an alert, sending a notification), security automation runs without interruption. The analyst reviews a summary, not raw data. That shift alone cuts hours off the average investigation.

For high-impact actions, the system pauses and asks for approval before executing. An EC2 instance with unrestricted SSH gets flagged, the remediation workflow fires, but a human confirms before the port closes on a critical system. That approval gate is what makes the difference between safe automation and reckless automation.
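The approval gate described above can be sketched as follows. This is a minimal illustration under stated assumptions, not Secure.com's implementation: `RemediationAction`, `requires_approval`, and `execute` are hypothetical names, and the approver is a plain callback standing in for whatever chat or ticketing integration a team actually uses.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class RemediationAction:
    name: str             # hypothetical action label, e.g. "restrict_ssh_ingress"
    target: str           # hypothetical asset identifier
    high_impact: bool     # the action changes system state disruptively
    critical_asset: bool  # the target is production or business-critical


def requires_approval(action: RemediationAction) -> bool:
    """Pause for a human when the action is high-impact or hits a critical asset."""
    return action.high_impact or action.critical_asset


def execute(
    action: RemediationAction,
    run: Callable[[RemediationAction], None],
    approve: Callable[[RemediationAction], bool],
) -> str:
    """Run the action, consulting the approver first only when the gate applies."""
    if requires_approval(action) and not approve(action):
        return "rejected"
    run(action)
    return "executed"


if __name__ == "__main__":
    close_ssh = RemediationAction("restrict_ssh_ingress", "example-critical-host", True, True)
    # The approver declines here, so the port stays as it was.
    print(execute(close_ssh, run=lambda a: print("running", a.name), approve=lambda a: False))
```

Routine actions never reach the approver, so the gate adds no latency to the high-volume work.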

Four things make this model trustworthy:

Organizations that use security AI with structured oversight save an average of $2.2 million per breach compared to those without it (IBM Cost of a Data Breach Report, 2024). The governance isn't overhead; it's where the value comes from.

Teams that get this right track a small set of key metrics consistently:

Analyst feedback matters just as much as the numbers. If the team is building workarounds (auto-closing alerts without review, ignoring escalations), the automation isn't working. That's a signal to retune, not to push harder.

The other risk: automating a broken process at scale. If the underlying triage logic is flawed, automation doesn't fix it; it repeats the same mistakes faster and at higher volume. Fix the process before you automate it.

Start narrow, prove the results, then expand. Automate low-risk, high-volume tasks first. Build trust in the tooling before touching anything that involves containment or production systems. That's not slow; it's how you avoid the outage that kills the whole program.

Secure.com augments L1 and L2 analysts by handling repetitive work, freeing them to focus on threats that require human judgment and strategic thinking.

L1 automation focuses on alert triage — filtering false positives, enriching alerts, and routing real threats. L2 automation supports deeper investigation: correlating events across systems, pulling context automatically, and surfacing pre-built case summaries. Both tiers benefit, but the type of work being automated is different.
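The L1 flow of filtering, enriching, and routing can be sketched as a small pipeline. Everything here is assumed for illustration: the alert fields, the `KNOWN_BENIGN` allowlist, the `ASSET_OWNERS` lookup, and the severity cutoff of 7 are hypothetical stand-ins for a real detection stack and asset inventory.

```python
from typing import Optional

KNOWN_BENIGN = {"scheduled_vuln_scan"}      # hypothetical allowlist of noisy rules
ASSET_OWNERS = {"web-01": "platform-team"}  # hypothetical asset inventory
L2_SEVERITY_CUTOFF = 7                      # assumed escalation threshold


def triage(alert: dict) -> Optional[dict]:
    """Filter, enrich, and route one alert; None means it was auto-closed."""
    # 1. Filter: drop alerts raised by rules known to be benign.
    if alert["rule"] in KNOWN_BENIGN:
        return None
    # 2. Enrich: attach ownership context without mutating the input.
    enriched = {**alert, "owner": ASSET_OWNERS.get(alert["host"], "unknown")}
    # 3. Route: higher-severity alerts escalate to L2, the rest stay with L1.
    enriched["queue"] = "L2" if alert["severity"] >= L2_SEVERITY_CUTOFF else "L1"
    return enriched


if __name__ == "__main__":
    sample = {"rule": "ssh_bruteforce", "host": "web-01", "severity": 8}
    print(triage(sample))
```

The L2 side would consume the enriched record rather than the raw alert, which is where the pre-built case summary comes from.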

Set confidence thresholds for every automated action. High-confidence, low-impact actions run automatically. Lower-confidence or high-impact actions require human approval before executing. Pair that with immutable audit logs and rollback capability, and the system stays accountable.
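A minimal sketch of that combination follows. The 0.9 threshold, the `decide` function, and the `AuditLog` class are assumptions for illustration. The hash chain only makes tampering detectable in memory; real immutability would need write-once storage. Rollback is modeled as an undo callable recorded alongside each action.

```python
import hashlib
import json
from typing import Callable, List

AUTO_THRESHOLD = 0.9  # assumed cutoff; in practice tuned per action type


def decide(confidence: float, high_impact: bool) -> str:
    """High-confidence, low-impact actions run; everything else needs approval."""
    if confidence >= AUTO_THRESHOLD and not high_impact:
        return "auto"
    return "needs_approval"


def _digest(action: str, decision: str, prev: str) -> str:
    body = {"action": action, "decision": decision, "prev": prev}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log in which each entry hashes the one before it."""

    def __init__(self) -> None:
        self.entries: List[dict] = []
        self._undo: List[Callable[[], None]] = []

    def _append(self, action: str, decision: str) -> None:
        prev = self.entries[-1]["hash"] if self.entries else ""
        self.entries.append({"action": action, "decision": decision,
                             "prev": prev, "hash": _digest(action, decision, prev)})

    def record(self, action: str, decision: str, undo: Callable[[], None]) -> None:
        """Log an executed action together with the callable that reverses it."""
        self._append(action, decision)
        self._undo.append(undo)

    def rollback_last(self) -> None:
        """Reverse the most recent un-rolled-back action and log the rollback."""
        self._undo.pop()()
        self._append("rollback", "manual")

    def verify(self) -> bool:
        """Recompute the chain; an edited or reordered entry breaks it."""
        prev = ""
        for e in self.entries:
            if e["prev"] != prev or e["hash"] != _digest(e["action"], e["decision"], prev):
                return False
            prev = e["hash"]
        return True
```

A caller would check `decide(...)` first, perform the action, then call `record(...)` with an undo function, so every automated change leaves both a verifiable trail and a way back.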

Threat hunting, strategic security decisions, and any action on production or business-critical systems should always have a human in the loop. Automation is good at pattern recognition and repetitive execution — it's not built to handle novel threats or situations that require contextual judgment.

Teams that start with well-scoped, low-risk use cases typically see measurable improvements in MTTR and analyst workload within the first few weeks. Broader results — reduced burnout, better SLA adherence, fewer missed threats — show up over the first one to three months as the system learns the environment and playbooks mature.
