Have You Ever Looked at Your Tech Stack and Wondered What Half of It Does?

Your security stack isn't failing because you have too few tools; it's failing because too many of them are working against each other.

A bigger security stack doesn't mean better security. When your team spends more time managing tools than stopping threats, the stack itself becomes the problem. This post breaks down why that happens and what a leaner, smarter setup actually looks like.

A SOC analyst once said, "I spend all day checking alerts that never go anywhere, then come back to hundreds more. It never ends." That wasn't a one-off complaint. That's what daily life looks like in most security teams right now. The tools are there. The budget got spent. But the work still falls on the same exhausted people.

The problem isn't a lack of tools. It's that the tools don't work together, and your analysts end up being the glue.

Every new threat over the past decade came with a new product to buy.

Ransomware? New tool.

Cloud misconfigurations? Another tool.

Identity risks? Add one more.

Over time, the stack grew into something nobody fully understands anymore.

The logic made sense at the time: cover every threat category with a dedicated solution. But nobody planned for what happens when 15 tools all fire alerts at the same time with no shared context.

Security teams now spend more time switching between platforms than actually investigating threats. According to IBM's Cost of a Data Breach Report, organizations with high tool complexity had breach costs nearly 20% higher than those with more streamlined operations. Additionally, industry research shows that 95% of security leaders report running overlapping tools, with most organizations using less than half of the features they pay for.

Licensing fees are easy to see on a spreadsheet. Analyst hours spent copy-pasting data between tools are not.

When your team becomes the integration layer (manually pulling logs from one system, correlating them in another, then writing up findings in a third), that's not a workflow. That's a daily tax on your most important resource: human attention.

When 95% of security leaders report running overlapping tools, and most organizations use less than half of the features they pay for, the extra spend isn't buying more coverage. It's buying more noise.

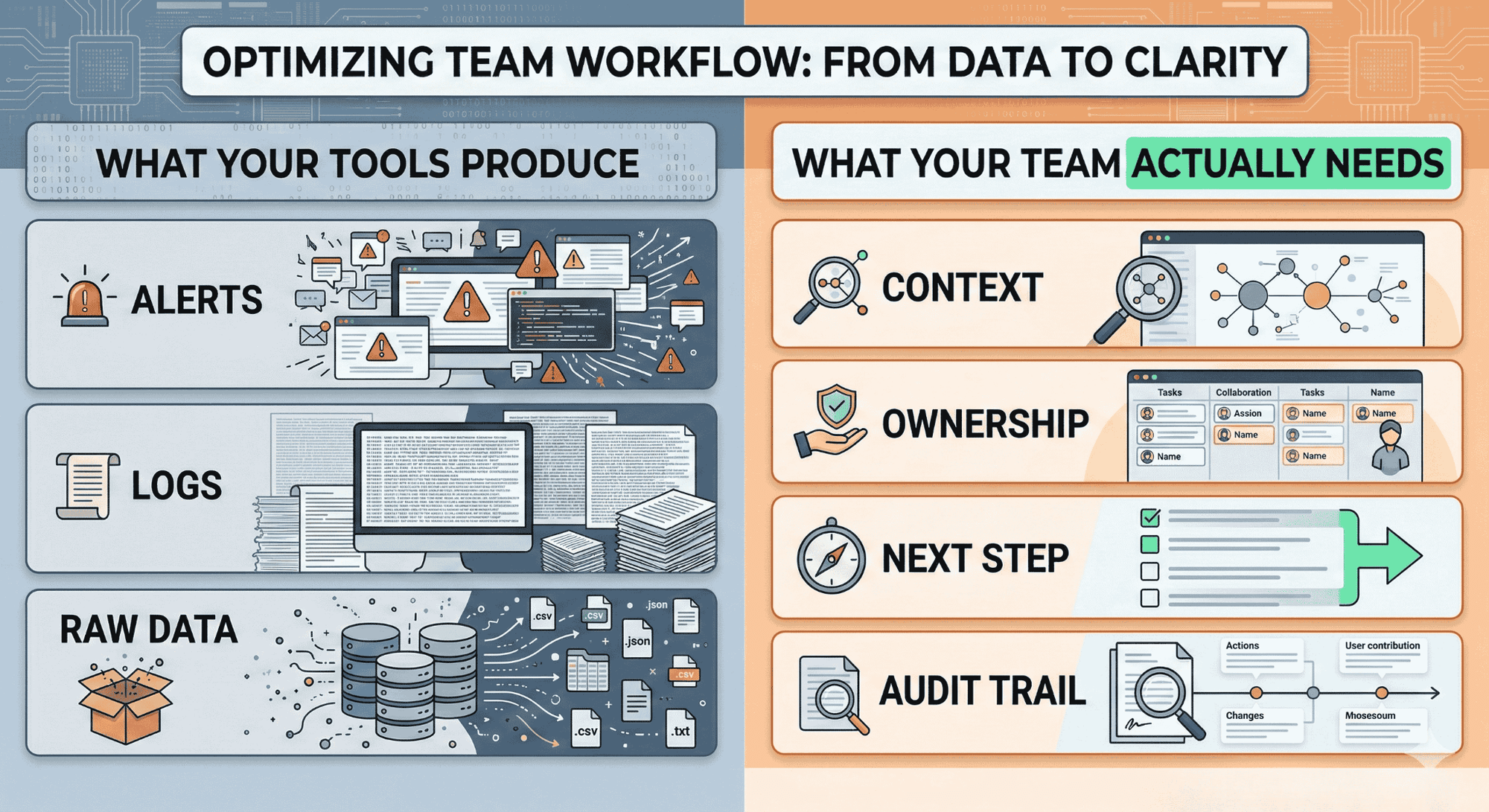

Your tools generate signals. But signals aren't investigations. Somebody still has to figure out what happened, who owns the asset, how bad it is, and what to do next. That part doesn't happen automatically, and that's where most of the time goes.

A SIEM fires an alert. An EDR flags a process. A cloud scanner finds a misconfiguration. None of those tools tell you if the three things are connected, which asset is at risk, or who needs to act.
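To make that gap concrete, here is a minimal sketch of the correlation step that none of the individual tools performs. It assumes every signal can be reduced to a shared asset identifier and a timestamp, which real tools rarely provide without normalization. The `Signal` fields, the source labels, and the 30-minute window are illustrative assumptions, not any vendor's schema.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Signal:
    source: str         # hypothetical label such as "siem", "edr", "cloud"
    asset: str          # hypothetical shared asset identifier
    seen_at: datetime
    summary: str


def correlate(signals, window=timedelta(minutes=30)):
    """Group signals on the same asset that occur within `window` of each other.

    Returns only the groups reported by more than one source, since those are
    the ones a single tool cannot see on its own.
    """
    by_asset = defaultdict(list)
    for signal in signals:
        by_asset[signal.asset].append(signal)

    groups = []
    for items in by_asset.values():
        items.sort(key=lambda s: s.seen_at)
        current = [items[0]]
        for signal in items[1:]:
            # Extend the group while the gap to the previous signal is small.
            if signal.seen_at - current[-1].seen_at <= window:
                current.append(signal)
            else:
                groups.append(current)
                current = [signal]
        groups.append(current)

    return [g for g in groups if len({s.source for s in g}) > 1]
```

Passing in one SIEM alert, one EDR detection, and one cloud finding for the same host within a few minutes returns a single group containing all three. That group is the starting point of an investigation rather than three separate tickets.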

That gap (between a signal and a decision-ready investigation) is where analyst hours disappear. Studies show that about 67% of daily alerts go uninvestigated because teams simply can't keep up with the volume.

In fact, typical SOC environments see 11,000+ alerts per day, with 70% of alerts being ignored due to overwhelming volume and lack of context.

Siloed tools don't just slow teams down. They create blind spots. When your SIEM doesn't talk to your identity platform and neither connects to your cloud posture tool, attackers move through the seams between them.

Fragmentation doesn't just hurt efficiency. It creates the exact visibility gaps that breaches live in: without correlation across SIEM, identity, and cloud security posture data, lateral movement and privilege escalation go undetected. This is why attack path analysis across your entire environment is critical, not just point-in-time vulnerability scanning.

Picking the "best" tool in every category sounds smart. But best-of-breed only works if everything integrates cleanly, which it rarely does. You end up with a stack that's excellent in theory and exhausting in practice.

The result is longer detection times, harder-to-trace incidents, and SOC analysts who feel like they're fighting their own tooling instead of the threat.

A well-functioning security stack isn't the biggest one. It's the one where work actually gets finished (investigated, responded to, documented, and closed) without the team burning out to make it happen.

Coverage means you own a tool that watches a threat category. Throughput means the threat was actually handled: context gathered, decision made, action taken, evidence logged.

Those are two very different things. Most stacks are optimized for coverage. The ones that actually protect organizations are optimized for throughput.
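One way to see the difference is to measure both. The sketch below is an illustration under stated assumptions: each case is a plain dictionary with one boolean per stage, and the stage names are hypothetical labels for the four steps named above, not fields from any real product.

```python
# Hypothetical stage names for the four steps a handled threat goes through.
STAGES = ("context_gathered", "decision_made", "action_taken", "evidence_logged")


def coverage(categories_watched, categories_in_scope):
    """Share of in-scope threat categories that at least one tool watches."""
    in_scope = set(categories_in_scope)
    if not in_scope:
        return 0.0
    return len(set(categories_watched) & in_scope) / len(in_scope)


def throughput(cases):
    """Share of cases in which every stage was completed."""
    if not cases:
        return 0.0
    finished = sum(
        all(case.get(stage, False) for stage in STAGES) for case in cases
    )
    return finished / len(cases)
```

A stack can score 1.0 on `coverage` while `throughput` stays low, which is the situation this post describes: every category has a tool, but few cases make it through all four stages.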

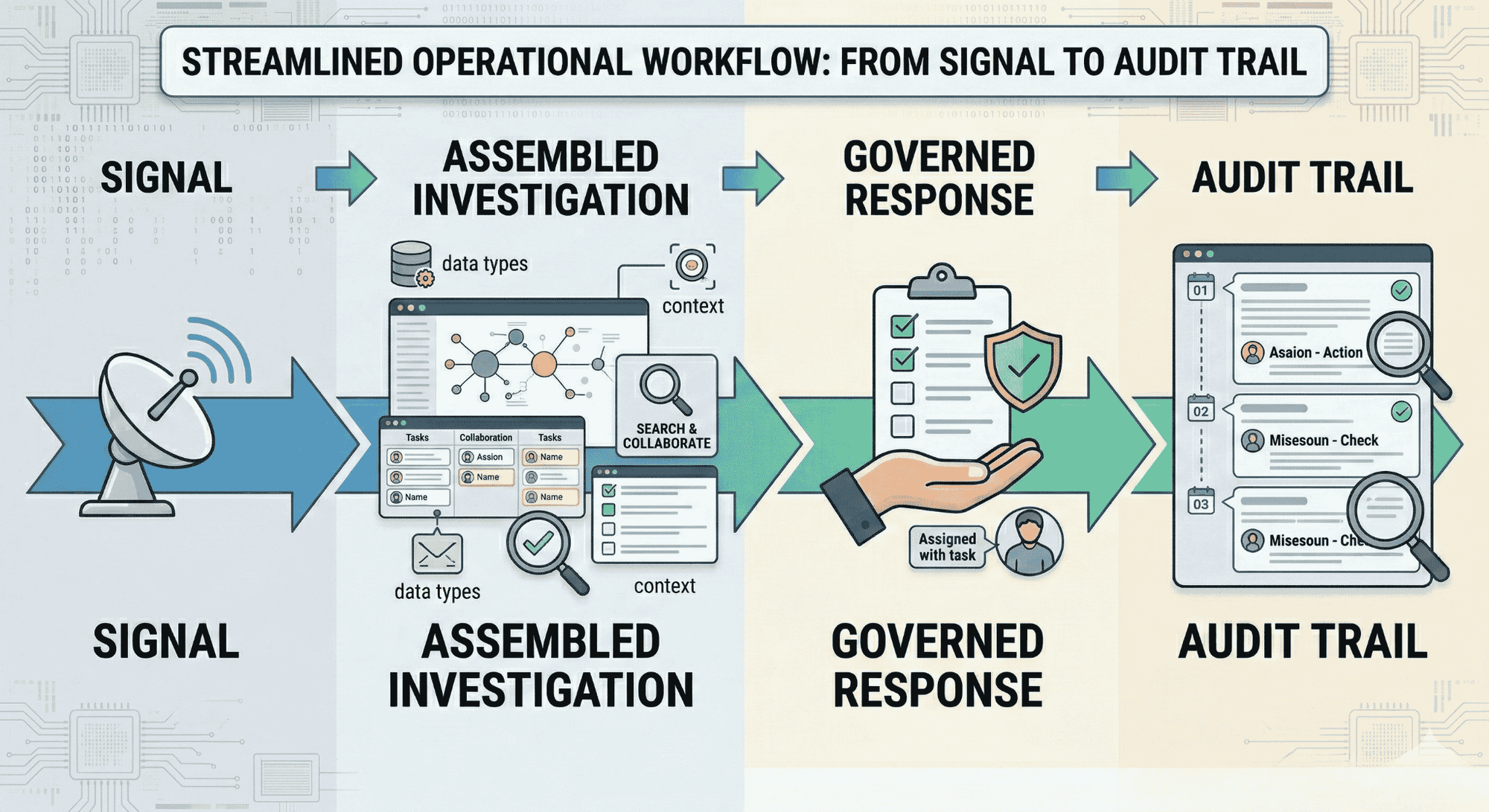

Speed without controls creates its own risk. When response actions run without approvals, without reversibility, and without documentation, you trade one problem for another.

A stack that works doesn't just detect and respond. It does it with policy-bound workflows that create a defensible record of every action taken. That matters for regulators, for post-incident reviews, and for understanding what actually happened.

Analysts should be making decisions, not chasing context across 12 platforms.

The difference between an analyst who's burned out and one who's effective isn't talent; it's what the system asks them to do manually. When investigations assemble themselves, when ownership routes automatically, and when response runs through governed workflows, the human's job becomes judgment. That's where they should be spending their time.

The security industry is very good at selling urgency. Every product promises coverage you don't have. Before the next renewal or new purchase, slow down and pressure-test what's already there.

If your team needs to manually pull data from this tool and combine it with three others to get one answer, it's adding work, not removing it. A useful tool produces output your team can act on directly.

Any tool that takes action in your environment needs to leave a clean record. If you can't answer "what ran, who approved it, and what changed," you can't defend it to a regulator or an exec team after an incident.
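As a rough sketch of what a clean record could contain, the example below captures the three questions in one structure and refuses to run an action that has no approver. Every name here (`ActionRecord`, `run_with_record`, the field names) is hypothetical, and the in-memory list stands in for whatever append-only log store a real deployment would use.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ActionRecord:
    action: str        # what ran
    target: str        # what it ran against
    approved_by: str   # who approved it
    before: dict       # state prior to the change
    after: dict        # state after the change
    ran_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def run_with_record(action, target, approved_by, state, change, log):
    """Apply `change` to `state` only if approved, and append a record to `log`."""
    if not approved_by:
        raise PermissionError(f"{action} on {target} has no approver")
    before = dict(state)   # snapshot, which also makes the change reversible
    state.update(change)
    record = ActionRecord(action, target, approved_by, before, dict(state))
    log.append(json.dumps(asdict(record), sort_keys=True))
    return record
```

Keeping the `before` snapshot alongside the `after` state is a deliberate choice: it answers "what changed" and gives the team what it needs to roll the action back.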

Adding a new alert source without adding context is just adding noise. A tool that integrates with what you already have (and makes existing signals more useful) is worth far more than one that runs in isolation.

Map the full workflow before you buy. If the tool detects something but remediation still requires three human handoffs, two other platforms, and a spreadsheet, you're not buying resolution. You're buying another starting point.

Most security platforms give you more tools to manage. Secure.com's Digital Security Teammates take the opposite approach. Instead of adding to the pile, they work alongside your existing stack and turn fragmented signals into investigation-ready cases with context, recommended actions, and complete audit trails, while keeping humans in control of final decisions.

Secure.com connects to your existing SIEM, EDR, identity platforms, and cloud tools through 200+ out-of-the-box integrations. It pulls signals from all of them, builds the investigation narrative automatically, and routes the right decision to the right person with full context already assembled. Your analysts stop chasing logs across platforms. They start making decisions on work that's already ready for them.

Speed without control creates new risk. Secure.com doesn't just automate; it automates within policy-bound workflows that include approvals, reversibility, and a complete action trail for everything that runs.

That means every response action is documented, every change is traceable, and your team can defend exactly what happened and why, whether to regulators, to leadership, or in a post-incident review.

Secure.com offers role-specific Digital Teammates built for different parts of the security operation.

Each teammate handles the work in its domain, not by replacing your team, but by making every analyst more effective than they could be alone.

Teams using Secure.com's Digital Security Teammates shift from reactive alert-chasing to proactive, outcome-driven security operations.

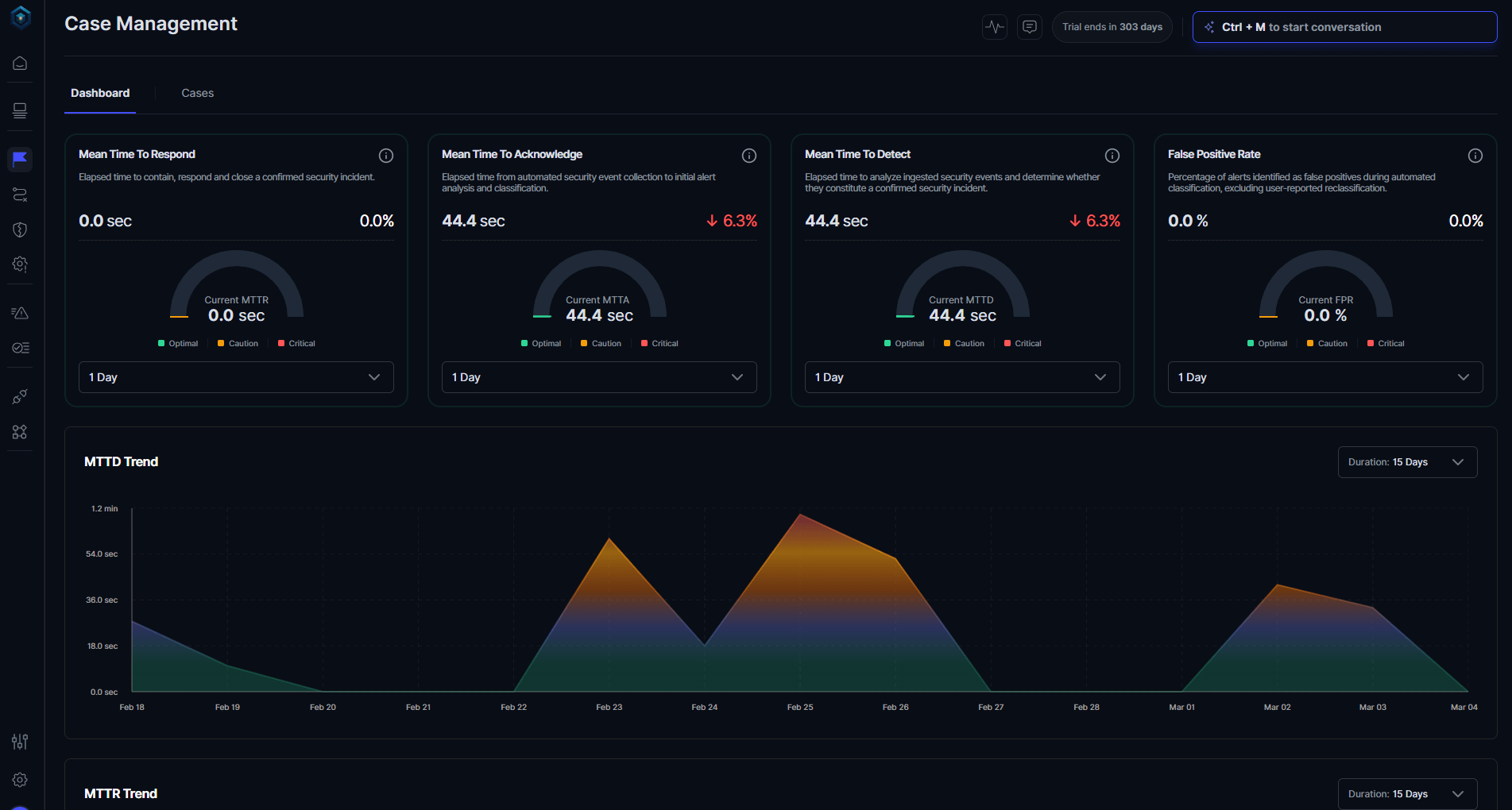

The difference shows up in measurable outcomes: 30-40% faster detection (MTTD), 45-55% faster response (MTTR), and a 70% reduction in manual triage workload.

If your current stack is good at detecting but slow at resolving, Secure.com is the execution layer that closes that gap.

The goal was never to own every tool in the category. The goal was to stop threats. If your stack is growing but your team is still drowning, the stack isn't doing its job.

The strongest security teams aren't the ones with the most tools. They're the ones who know exactly what each piece does, where it connects, and what happens when it runs.

If you can't answer those questions for half your stack right now, that's not a procurement problem. That's an operations problem, and fixing it is worth more than the next product renewal.

Start by asking how many tools your analysts touch in a single investigation. If the answer is more than three or four, and none of them talk to each other automatically, you have a fragmentation problem. Also look at alert-to-investigation ratios: if most alerts never get reviewed, you have more coverage than your team can operationalize.
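Both checks can be run against data most teams already have. The sketch below assumes each investigation record lists the tools an analyst touched. The `tool_limit` of four and the 50% review threshold are rough readings of "more than three or four" and "most alerts", not established benchmarks, and the field names are hypothetical.

```python
from statistics import median


def fragmentation_report(investigations, alerts_total, alerts_reviewed,
                         tool_limit=4, min_review_rate=0.5):
    """Summarize tools touched per investigation and the alert review rate."""
    # Count distinct tools per investigation so repeat visits are not inflated.
    tools_per_case = [len(set(inv["tools"])) for inv in investigations]
    typical = median(tools_per_case) if tools_per_case else 0
    review_rate = alerts_reviewed / alerts_total if alerts_total else 0.0
    return {
        "median_tools_per_investigation": typical,
        "review_rate": review_rate,
        "fragmented": typical > tool_limit,
        "more_coverage_than_capacity": review_rate < min_review_rate,
    }
```

The median is used instead of the mean so that one unusually complex incident does not skew the picture of a typical investigation.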

Usually, yes. A tool that integrates well and produces investigation-ready output is more valuable than a specialized one that adds another manual handoff. The features you never use because they don't connect to anything aren't features; they're line items.

Coverage means a tool watches a threat category. Throughput means threats in that category actually get investigated, responded to, and closed. Most stacks have plenty of coverage. Very few are built for throughput.

Tie it to time and cost. Show how many analyst hours go toward manual coordination between tools. Show the alert-to-close rate. Show the overlap between what you pay for and what your team actually uses. The business case for consolidation writes itself when you frame it as buying back analyst capacity, not cutting features.

Secure.com's Digital Security Teammate handles the repetitive 70% (triage, enrichment, and routine remediation) so L1 and L2 analysts can focus on decisions that actually need a human.
