Key Takeaways

- A good metrics program is reviewed quarterly and tied to what the business is actually trying to protect, not what is easy to count.

- Tracking alert volume is not the same as measuring risk. Most dashboards show activity, not outcomes.

- MTTD, MTTR, and false positive rate are the core metrics every SOC should baseline first, alongside asset coverage and incident volume, before adding anything else.

- Risk scoring needs business context. Asset criticality matters more than CVSS scores alone.

- The boardroom speaks in dollars and downtime. Translate every security metric into a business impact before presenting it to executives.

Introduction

A SOC analyst once put it plainly: “We are measured on how many alerts we close, not how much risk we reduce. It is all security theater.”

That one line describes the problem most security teams are living with. The dashboards look busy. The numbers keep climbing. But nobody can tell the board whether the company is actually safer than it was last quarter.

Most SOC Metrics Are Measuring the Wrong Things

Security teams are not tracking bad metrics by accident. The tools they use are built to count activity, and counting activity is easy. Tracking actual risk reduction is harder.

58% of CISOs admit they struggle to communicate technical data to senior leadership in a language executives can understand. That is not a personality problem. It is a structural one.

- The SOC speaks in alerts, IOCs, and ticket queues.

- The boardroom speaks in revenue, downtime, and liability.

41% of security leaders cannot correlate their work to risk mitigation, even though 82% say incident reduction is the top metric boards want to see when evaluating security ROI. That gap is costing teams credibility and funding.

The Alert Volume Trap

Closing 500 alerts a day sounds like a productive team. It is not, unless those alerts were worth investigating in the first place.

Best-in-class security teams maintain a detection accuracy rate of 85% or higher. Teams hovering below 70% are burning analyst time, delaying response, and increasing the risk of missing something genuinely critical. Alert volume without alert quality is noise. And noise burns out analysts faster than actual incidents do.

Activity vs. Outcome: The Measurement Gap

Completing 100% of security training is an activity metric. It tells you what your team did, not what changed because of it.

The most common mistake in security measurement is confusing activity with progress. A high level of activity can coexist with very high risk, because activity does not measure real behavior or real outcomes.

Security teams need to stop reporting what they did and start reporting what changed as a result.

The SOC Metrics That Actually Reduce Risk

Not every metric is a waste of time. Some of them genuinely show whether a SOC is getting better or just staying busy. These are the ones worth tracking.

Mean Time to Detect (MTTD)

MTTD measures how long it takes your team to spot a threat after it enters your environment. Every minute a threat goes undetected, the potential damage grows.

Automated detection and AI-assisted triage can cut MTTD by 30–40%. Secure.com’s SOC Teammate achieves 70% faster detection through automated signal normalization, threat intelligence enrichment, and MITRE ATT&CK correlation. For a team still running manual alert review, the gap is significant.

Target: under 24 hours for most organizations, under one hour for high-risk environments.
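As a rough illustration of the MTTD definition above, the sketch below averages the gap between when a threat entered the environment and when it was detected, then compares the result with the 24-hour target. The function name, the tuple layout, and the sample timestamps are all hypothetical, and in practice the entry time is usually an estimate reconstructed during investigation.

```python
from datetime import datetime, timedelta
from statistics import mean


def mean_time_to_detect(incidents):
    """Average gap between threat entry and detection.

    `incidents` is a list of (entered_at, detected_at) datetime pairs.
    """
    gaps_in_seconds = [
        (detected - entered).total_seconds() for entered, detected in incidents
    ]
    return timedelta(seconds=mean(gaps_in_seconds))


# Hypothetical sample data: three incidents with 12 h, 30 h, and 18 h gaps.
incidents = [
    (datetime(2024, 1, 1, 8, 0), datetime(2024, 1, 1, 20, 0)),
    (datetime(2024, 1, 5, 9, 0), datetime(2024, 1, 6, 15, 0)),
    (datetime(2024, 1, 9, 0, 0), datetime(2024, 1, 9, 18, 0)),
]

mttd = mean_time_to_detect(incidents)
meets_target = mttd < timedelta(hours=24)  # 24-hour target from the text
```

A mean hides outliers, so one very slow detection can sit behind a healthy-looking average. Reporting the median or the slowest case next to it is a reasonable addition.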

Mean Time to Respond (MTTR)

MTTR tracks how long it takes from detection to containment or resolution. Detecting a threat quickly is only half the job.

Teams using automated response playbooks report 45–55% faster MTTR compared to manual workflows. Secure.com’s SOC Teammate achieves 50% faster response through pre-approved containment playbooks with human-in-the-loop governance for high-impact actions. That speed can be the difference between a contained incident and a full breach.

Target: under one hour for critical threats.
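Because the one-hour target applies to critical threats specifically, it can help to compute MTTR per severity rather than as one blended number. The following is a minimal sketch under that assumption; the severity labels, the `(severity, minutes_to_contain)` layout, and the sample cases are hypothetical.

```python
from collections import defaultdict
from datetime import timedelta


def mttr_by_severity(cases):
    """Average detection-to-containment time, grouped by severity label.

    `cases` is a list of (severity, minutes_to_contain) pairs.
    """
    grouped = defaultdict(list)
    for severity, minutes_to_contain in cases:
        grouped[severity].append(minutes_to_contain)
    return {
        severity: timedelta(minutes=sum(values) / len(values))
        for severity, values in grouped.items()
    }


# Hypothetical sample data.
cases = [("critical", 40), ("critical", 70), ("low", 300), ("critical", 55)]

mttr = mttr_by_severity(cases)
critical_on_target = mttr["critical"] < timedelta(hours=1)
```

Grouping this way keeps a long tail of slow, low-severity cases from masking how quickly the team contains the threats that matter most.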

False Positive Rate

A high false positive rate means analysts are spending most of their day chasing nothing. That leaves real threats buried in noise.

Research shows organizations with false positive rates above 55% face higher breach rates, because analysts start tuning out alerts entirely. The right target depends on your environment, but trending down over time is the only direction that matters.
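One simple way to track that trend is sketched below. It assumes the operational definition most SOCs use, the share of triaged alerts that were closed as false positives, rather than the statistical definition that also needs true negatives. The disposition labels and weekly samples are hypothetical.

```python
from collections import Counter


def false_positive_rate(dispositions):
    """Share of triaged alerts closed as false positives (0.0 to 1.0)."""
    counts = Counter(dispositions)
    total = sum(counts.values())
    return counts["false_positive"] / total if total else 0.0


# Hypothetical alert dispositions for two consecutive weeks.
weekly = {
    "week_1": ["false_positive"] * 60 + ["true_positive"] * 40,
    "week_2": ["false_positive"] * 45 + ["true_positive"] * 55,
}

rates = {week: false_positive_rate(items) for week, items in weekly.items()}
trending_down = rates["week_2"] < rates["week_1"]
```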

Alert-to-Incident Ratio

This metric tells you what percentage of your alerts turn into real, confirmed security incidents. A low percentage means your detection tools are noisy and poorly tuned. A high percentage means your team is working on things that actually matter.

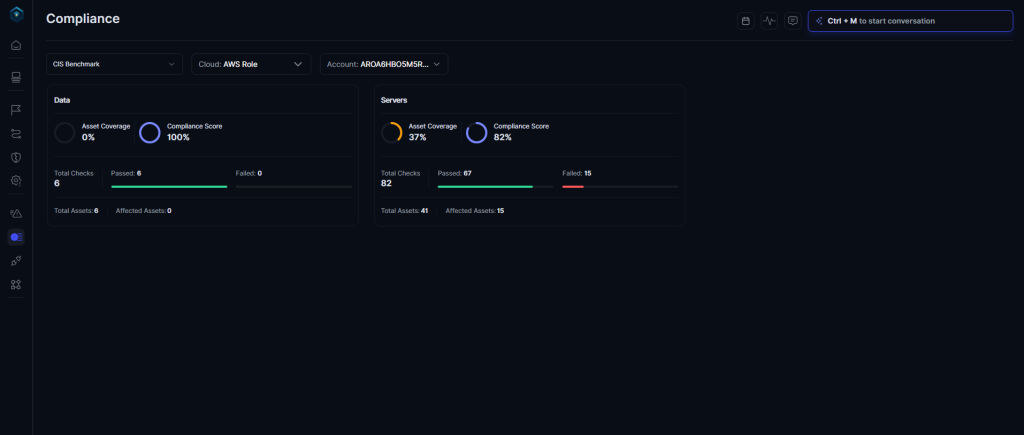
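The alert-to-incident metric described above comes down to one division. The sketch below expresses it as a percentage of alerts that became confirmed incidents; the function name and the before/after figures are made up for illustration.

```python
def alert_to_incident_pct(total_alerts, confirmed_incidents):
    """Percentage of alerts that became confirmed incidents."""
    if total_alerts == 0:
        return 0.0
    return 100.0 * confirmed_incidents / total_alerts


# Hypothetical month before and after detection-rule tuning.
before_tuning = alert_to_incident_pct(5000, 50)
after_tuning = alert_to_incident_pct(1200, 48)
```

In this made-up example the number of confirmed incidents barely changes while alert volume drops sharply. That is the pattern to look for: less noise without losing real detections.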

Asset Coverage

You cannot detect threats against systems you are not monitoring. Asset coverage shows whether your SOC has visibility across every important endpoint, cloud resource, and critical application. Gaps in coverage are where attackers hide.
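A minimal sketch of a coverage check follows, assuming you can export one list of asset names from your inventory and another from your monitoring tooling. The asset names are hypothetical.

```python
def asset_coverage(inventory, monitored):
    """Return (coverage fraction, sorted list of unmonitored assets)."""
    inventory = set(inventory)
    gaps = sorted(inventory - set(monitored))
    covered = len(inventory) - len(gaps)
    fraction = covered / len(inventory) if inventory else 0.0
    return fraction, gaps


# Hypothetical exports from an asset inventory and a monitoring platform.
inventory = ["web-01", "db-01", "pay-api", "hr-laptop-17"]
monitored = ["web-01", "db-01", "pay-api", "legacy-box"]

coverage, gaps = asset_coverage(inventory, monitored)
```

The list of gaps is usually more actionable than the percentage. Note that `legacy-box` is monitored but missing from the inventory, which points to a different problem: an incomplete inventory.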

Translating SOC Data Into Business Risk Language

This is where most security teams fall short. The metrics exist. The data is there. But nothing gets translated into language that makes a CFO or a board member stop and pay attention.

88% of boards already view cybersecurity as a business risk, not just an IT problem. The CISO’s job is to translate security data so that the board actually listens. Boards do not need to understand what a SIEM is. They need to understand what a breach would cost, how fast the team can respond, and whether the trend is improving.

Stop Reporting What You Blocked. Report What You Protected.

Every security metric can be reframed around a business outcome.

- “We reduced MTTD by 20%” becomes “By catching threats 20% faster, we reduced potential financial exposure in a breach scenario by an estimated $2.5 million.”

- “Our false positive rate dropped from 60% to 35%” becomes “Our analysts now spend roughly 25 percentage points more of their time on real threats instead of chasing false alarms.”
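The arithmetic behind the false positive example is worth checking before it goes on a slide. The sketch below assumes, as a simplification, that analyst time splits in proportion to alert counts. Under that assumption, a drop from 60% to 35% moves the share of time spent on real threats from 40% to 65%.

```python
def share_of_time_on_real_threats(false_positive_rate):
    """Simplifying assumption: analyst time is proportional to alert share."""
    return 1.0 - false_positive_rate


before = share_of_time_on_real_threats(0.60)
after = share_of_time_on_real_threats(0.35)

# Absolute change, in percentage points.
point_gain = round((after - before) * 100, 1)
# Relative change compared with the starting share.
relative_gain = round((after - before) / before * 100, 1)
```

The absolute gain is 25 percentage points, while the relative gain is larger because the starting share was only 40%. Stating which of the two a slide reports avoids an awkward question from a numerate board member.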

The most effective CISOs translate metrics into business impact. Instead of reporting technical results, they say things like: ‘Our new endpoint strategy reduced potential downtime by 40%.’ That is what resonates in a boardroom.

Risk Scoring Based on Business Context

Not every vulnerability is equally dangerous. A flaw in a test environment is very different from a flaw in the payment processing system.

Risk scoring needs to account for asset criticality, not just technical severity. A critical asset with a medium-severity vulnerability is often more urgent than a low-priority system with a high CVSS score. Business context is what makes scoring useful.
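One simple way to fold business context into a score is to multiply technical severity by an asset-criticality weight. The weights, asset names, and CVSS values below are hypothetical placeholders, not a standard. A real program would calibrate them with the business owners of each system.

```python
# Hypothetical criticality weights; tune these to your own environment.
CRITICALITY_WEIGHT = {"low": 0.3, "medium": 0.6, "high": 1.0, "critical": 1.5}


def contextual_risk(cvss, criticality):
    """Scale a CVSS base score by how much the asset matters to the business."""
    return round(cvss * CRITICALITY_WEIGHT[criticality], 2)


findings = [
    {"asset": "test-env", "cvss": 9.1, "criticality": "low"},
    {"asset": "payment-processing", "cvss": 5.5, "criticality": "critical"},
]

# Highest contextual risk first.
ranked = sorted(
    findings,
    key=lambda f: contextual_risk(f["cvss"], f["criticality"]),
    reverse=True,
)
```

With these placeholder weights, the medium-severity flaw in payment processing outranks the high-CVSS flaw in the test environment, which matches the prioritization argued for above.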

How to Structure Executive Reporting

Boards do not need 47 metrics. They need three clear answers:

- What are the biggest risks the business faces right now?

- What is being done about them?

- Is the posture improving or getting worse?

Measuring and communicating risk remains one of the top challenges for security leaders. CISOs describe themselves as “always translators,” and the ability to take technical data and articulate the risk to the business is considered more of an art than a science.

The teams that get better at that translation earn more trust and more budget.

Building a Metrics Program That Grows With Your Business

A good metrics program does not stay the same year after year. The business changes. The threat landscape changes. The metrics need to keep up.

Start With a Baseline

Pick five metrics and measure them consistently for 90 days before adding more. MTTD, MTTR, false positive rate, asset coverage, and incident volume are a solid starting set. You cannot improve what you have not measured yet.
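A baseline can be as simple as the median of each metric over the 90-day window, with later readings compared against it. The sketch below shows two of the five metrics for brevity. The metric names and sample values are hypothetical.

```python
from statistics import median


def baseline(samples):
    """Median of each metric over the baseline window."""
    return {name: median(values) for name, values in samples.items()}


def change_vs_baseline(baseline_values, current):
    """Percent change of each current reading relative to its baseline."""
    return {
        name: round((current[name] - base) / base * 100, 1)
        for name, base in baseline_values.items()
    }


# Hypothetical readings collected during the 90-day baseline period.
samples = {
    "mttd_hours": [30, 26, 28, 24, 27],
    "false_positive_rate": [0.62, 0.58, 0.60],
}
current = {"mttd_hours": 21.6, "false_positive_rate": 0.45}

base = baseline(samples)
change = change_vs_baseline(base, current)
```

For both of these metrics a negative change is an improvement. For a metric such as asset coverage the sign flips, so label the direction explicitly in any report.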

Use Automation to Improve What You Track

Manual reporting is slow and error-prone. Modern platforms log every triage decision, investigation step, and response action automatically. That gives leadership a reliable, live view of security performance without analysts spending hours compiling spreadsheets.

At Secure.com, the SOC Teammate handles the bulk of initial case handling, presenting ready-to-review summaries instead of raw alert queues. That means faster metrics, cleaner data, and analysts focused on what actually needs human judgment.

Review Metrics Every Quarter

Security priorities shift. Business priorities shift. A quarterly review keeps your measurement program aligned to what the company is actually trying to protect. If a metric is no longer driving a decision, remove it.

How Secure.com Helps You Measure What Actually Matters

Most teams do not have a metrics problem. They have a visibility problem. Secure.com’s SOC Teammate gives analysts the context and automation they need to track outcomes, not just activity.

- Automated triage handles first-pass alert investigation so analysts spend time on confirmed threats, not noise.

- Built-in MTTD and MTTR tracking shows exactly where your team is fast and where it is losing time.

- Case Management ties every alert to a structured investigation, giving leadership clean data without manual reporting.

- Benchmark Compliance measures your SOC performance against industry standards and surfaces gaps before auditors do.

- Digital Security Teammate delivers ready-to-review case summaries so decisions get made faster and risk gets reported in plain language.

Conclusion

Security theater happens when teams measure effort and call it safety. Closing alerts is not the same as reducing risk. Filing tickets is not the same as protecting the business.

The shift from SOC metrics to business risk is not a reporting upgrade. It is a maturity shift. It means measuring outcomes, not activity. It means speaking the language of the board, not just the language of the SIEM. And it means building a metrics program that tells you whether the business is genuinely safer, not just whether the dashboard looks full.

Secure.com’s SOC Teammate is built around that exact shift. Less manual work. Cleaner data. Faster response. And metrics that actually mean something to the people making decisions.