Key Takeaways

- Alert volume is not the core problem. Alerts without context are.

- Enriching alerts with asset, user, and threat intel data separates real signals from noise.

- Correlation groups related events across tools into a single case, cutting analyst workload fast.

- Noise reduction via enrichment is not the same as suppression. It makes alerts smarter, not invisible.

- A repeatable workflow with regular tuning is what keeps noise low over time.

Introduction

A security team at a mid-sized company was processing over 4,000 alerts a day. Even after sorting through as much of the noise as they could, 67% of those alerts went uninvestigated. Not because the team was careless. Because there was simply no way to keep up. That is not a people problem. That is a workflow problem.

Most SOC teams spend around 80% of their time on triage instead of actual investigation. The goal is not to see fewer alerts. The goal is to stop treating every alert like an emergency and start building cases worth acting on.

Why Your Alert Volume Is Not the Real Problem

The average SOC receives somewhere between 1,000 and 4,000 alerts per day. The number itself is not what breaks teams. What breaks teams is that most of those alerts arrive with no context. No asset information. No user history. No connection to the three other alerts that fired five minutes earlier from a completely different tool.

A raw alert is just a data point. Without context, it is noise.

What Makes an Alert “Noisy”

An alert becomes noise when it shows up without enough information to act on. A few of the most common patterns:

- SIEM, EDR, and firewall tools fire separately on the same event, creating three or four tickets for what is actually one thing.

- The alert does not tell you whether the affected asset is a critical production server or a decommissioned test machine.

- There is no user behavior baseline, so you have no way to know if the activity is unusual or completely normal for that person.

- Related events happening within the same time window stay disconnected because tools do not talk to each other.

The Hidden Cost of Ignoring Noise

More than 50% of daily SOC alerts are false positives, according to industry research. That number is not just a productivity stat. It is the reason analysts stop trusting the system. When everything fires at the same severity, nothing feels urgent. Critical threats get buried under low-value pings. And the team that should be investigating real attacks is spending their shift closing tickets that should never have been opened.

Context Is What Turns an Alert Into a Signal

Context means answering the questions an analyst would ask in the first thirty seconds:

- Who triggered this?

- On what system?

- Is that normal for this person?

- Is this IP associated with anything known?

- What else happened around the same time?

When those answers are already attached to the alert, the analyst is not starting from zero. They are reviewing a pre-assembled picture, not building one from scratch.

Types of Context That Actually Matter

Not all context is equal. These four types make the biggest difference:

- Asset criticality: Is this a domain controller or a developer laptop? The same alert means something very different depending on the answer.

- User behavior history: A login at 2 AM from a new country looks very different if that user has done it before versus if it has never happened.

- Threat intelligence: Does the IP or domain involved match anything in known threat feeds? A connection to a flagged ransomware campaign changes the entire investigation priority.

- Time and environment context: An after-hours login from an employee who has never worked nights is a different story than the same login at 9 AM.
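To make these four context types concrete, here is a minimal enrichment sketch in Python. It assumes small in-memory lookup tables standing in for a CMDB, a per-user behavior baseline, and a threat intel feed. The names `ASSET_CRITICALITY`, `USUAL_COUNTRIES`, `USUAL_HOURS`, `THREAT_INTEL`, `Alert`, and `enrich` are all hypothetical and only illustrate the shape of the step.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime

# Hypothetical stand-ins for a CMDB, a per-user behavior baseline, and a
# threat intel feed. Every name and value here is illustrative.
ASSET_CRITICALITY = {"dc-01": "critical", "dev-laptop-17": "low"}
USUAL_COUNTRIES = {"sarah": {"US"}}
USUAL_HOURS = {"sarah": range(8, 19)}  # 08:00 to 18:59
THREAT_INTEL = {"203.0.113.50": "known credential stuffing infrastructure"}


@dataclass
class Alert:
    user: str
    host: str
    src_ip: str
    country: str
    timestamp: datetime
    context: dict = field(default_factory=dict)


def enrich(alert: Alert) -> Alert:
    """Attach the four context types to an alert. Nothing is removed or hidden."""
    ctx = alert.context
    # Asset criticality: unknown assets are flagged rather than assumed safe.
    ctx["asset_criticality"] = ASSET_CRITICALITY.get(alert.host, "unknown")
    # User behavior history: has this user connected from this country before?
    ctx["new_country"] = alert.country not in USUAL_COUNTRIES.get(alert.user, set())
    # Threat intelligence: None when the source IP has no match.
    ctx["threat_intel_match"] = THREAT_INTEL.get(alert.src_ip)
    # Time context: users without a baseline are never marked "outside hours".
    usual = USUAL_HOURS.get(alert.user, range(24))
    ctx["outside_usual_hours"] = alert.timestamp.hour not in usual
    return alert
```

The alert object comes back with every original field intact plus a `context` dictionary, which is the difference between enrichment and suppression in code form: nothing gets filtered out at this stage.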

How Enrichment Reduces False Positives Without Suppressing Alerts

There is an important difference between suppressing alerts and enriching them. Suppression hides alerts you do not want to see. Enrichment adds enough context so you can see clearly what is real and what is not.

Here is a real example of how that plays out:

An alert fires: “Failed login attempt. 5 retries. Account: sarah@example.com.”

- Without context: Looks like a potential brute-force. The analyst spends 30 minutes investigating.

- With context: The IP is from Sarah’s home city. Her last ten logins came from the same IP. She resets her password every few months and always fails a few times before succeeding. This is not an attack. This is Tuesday. That context did not suppress the alert. It resolved it in seconds.

On the other side: the same alert fires for an account that has never logged in remotely before, from an IP tied to a known credential stuffing campaign. Context does not close it. Context escalates it immediately. That is what enrichment is supposed to do.
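Once context is attached, the two outcomes in the Sarah example reduce to a small decision function. The sketch below is illustrative only: `FailedLoginContext`, its fields, and the disposition labels are hypothetical, and it assumes an upstream enrichment step has already filled them in. Every path returns an explicit disposition and a reason, so the alert is resolved or escalated on the record rather than hidden.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class FailedLoginContext:
    # Hypothetical enrichment fields, assumed to be filled in upstream.
    ip_in_recent_logins: bool        # source IP matches the user's recent history
    ip_in_home_region: bool          # source IP geolocates near the user
    has_logged_in_remotely: bool     # account has any remote login history
    threat_intel_match: Optional[str]


def triage(ctx: FailedLoginContext) -> tuple[str, str]:
    """Return (disposition, reason). Every path yields a recorded outcome;
    the alert is resolved or escalated, never silently dropped."""
    if ctx.threat_intel_match:
        return "escalate", f"source IP linked to {ctx.threat_intel_match}"
    if not ctx.has_logged_in_remotely:
        return "investigate", "first remote login attempt for this account"
    if ctx.ip_in_recent_logins and ctx.ip_in_home_region:
        return "close_benign", "source IP matches the user's login history"
    return "investigate", "no context explains the failures"
```

Checking threat intel first is a deliberate ordering choice in this sketch: a match against known-bad infrastructure should outrank any reassuring history.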

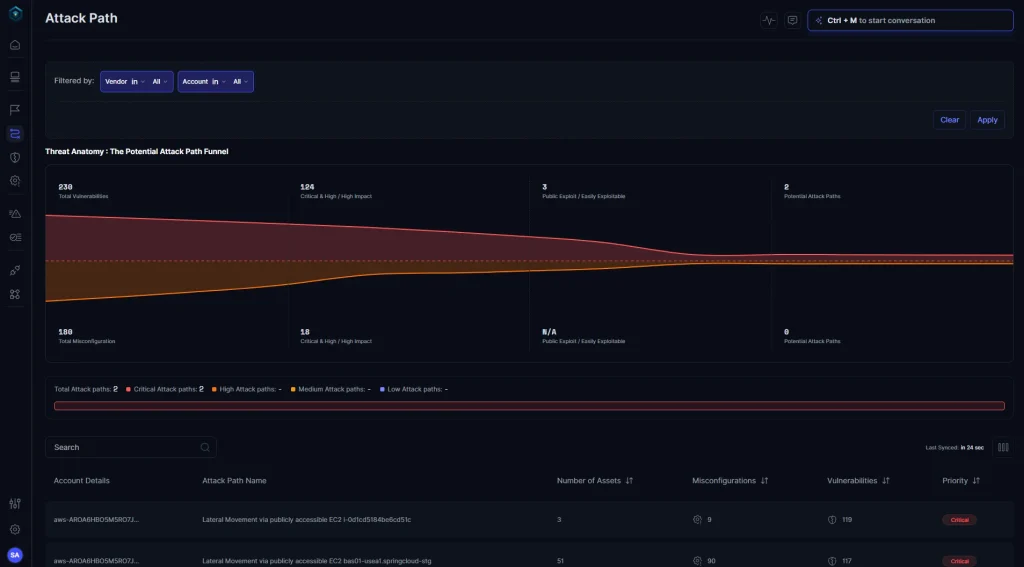

Correlation: How Individual Alerts Become One Real Case

Alert correlation is the process of connecting related events so that five alerts about the same incident become one case, not five separate tickets. Instead of each tool firing its own notification and leaving your team to piece it together, correlation does the connecting automatically.

Think of it this way: a firewall blocks an outbound connection. An EDR flags unusual process behavior on the same host. An identity tool shows a failed login from the same user five minutes earlier.

- Three tools.

- Three alerts.

- One attack chain.

Without correlation, your team investigates three separate events. With correlation, they see one story.

How Correlation Works Across Tools and Data Sources

For correlation to work, your security tools need to speak the same language. That is where data normalization comes in. When logs from your SIEM, EDR, firewall, and cloud tools are normalized into a shared schema, like the Open Cybersecurity Schema Framework (OCSF), the system can match events by user, host, time window, or behavior pattern.
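As a rough illustration of normalization, the sketch below maps three differently shaped tool records onto one common layout so that host and user values can be compared directly. Both the raw vendor field names (`epoch`, `src_host`, `detected_at`, `upn`, and so on) and the target keys are hypothetical. The target is a simplified, OCSF-inspired shape, not the real OCSF attribute names.

```python
from __future__ import annotations

from datetime import datetime, timezone


def normalize(source: str, raw: dict) -> dict:
    """Map a tool-specific record onto one shared shape: source, time, user, host, action.

    Field names on both sides are hypothetical. The target keys are a
    simplified stand-in, not real OCSF attribute names.
    """
    if source == "firewall":
        return {
            "source": source,
            "time": datetime.fromtimestamp(raw["epoch"], tz=timezone.utc),
            "user": None,  # this hypothetical firewall record carries no account
            "host": raw["src_host"].lower(),
            "action": raw["action"],
        }
    if source == "edr":
        return {
            "source": source,
            "time": datetime.fromisoformat(raw["detected_at"]),
            "user": raw["account"].lower(),
            "host": raw["device"].lower(),
            "action": raw["behavior"],
        }
    if source == "identity":
        return {
            "source": source,
            "time": datetime.fromisoformat(raw["ts"]),
            # Strip the domain so "Admin@corp.example" and "ADMIN" compare equal.
            "user": raw["upn"].split("@")[0].lower(),
            "host": (raw.get("workstation") or "").lower() or None,
            "action": raw["result"],
        }
    raise ValueError(f"no normalizer registered for {source!r}")
```

The important details are the boring ones: every timestamp becomes timezone-aware, and hostnames and usernames are lowercased, so "PROD-DB-01" from one tool and "prod-db-01" from another are treated as the same asset.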

Once normalized, correlation rules do the rest:

- Group events that involve the same user or asset within a defined time window.

- Identify sequences that match known attack patterns, such as MITRE ATT&CK techniques for lateral movement or credential access.

- Score the correlated group by combined risk, not individual alert severity.
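The first rule above, grouping events that share a user or asset within a time window, can be sketched in a few lines once events are normalized. This is a simplified greedy approach under stated assumptions, not how any particular product implements correlation: `correlate` is a hypothetical name, events are assumed to be dictionaries with `time`, `user`, and `host` keys, and a later event that links two already-open cases does not merge them.

```python
from __future__ import annotations

from datetime import datetime, timedelta


def correlate(events: list[dict], window: timedelta = timedelta(minutes=15)) -> list[list[dict]]:
    """Greedily group events that share a user or host within `window` of a case's latest event.

    Simplification: if a later event links two existing cases, they are not merged.
    """
    cases: list[dict] = []
    for ev in sorted(events, key=lambda e: e["time"]):
        users = {ev["user"]} - {None}
        hosts = {ev["host"]} - {None}
        for case in cases:
            close_in_time = ev["time"] - case["last"] <= window
            shares_entity = bool(users & case["users"] or hosts & case["hosts"])
            if close_in_time and shares_entity:
                case["events"].append(ev)
                case["users"] |= users
                case["hosts"] |= hosts
                case["last"] = ev["time"]
                break
        else:  # no existing case matched, so this event opens a new one
            cases.append({"events": [ev], "users": users, "hosts": hosts, "last": ev["time"]})
    return [case["events"] for case in cases]
```

Because each case accumulates the users and hosts it has seen, the identity alert (user only) and the firewall alert (host only) from the earlier attack-chain example still land in the same case once the EDR alert supplies both.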

What a Correlated Case Looks Like vs. a Raw Alert Queue

Before correlation, an analyst opens their queue and sees:

- 40 alerts from 6 different tools

- No clear connection between them

- Different severity labels, different formats, different timestamps

- No way to know which one to look at first

After correlation, that same situation becomes:

- 1 case: “Suspicious lateral movement attempt on host PROD-DB-01”

- Timeline: Three tools fired within 12 minutes of each other

- Impacted assets: One production database server, one admin account

- Suggested next step: Isolate host and review access logs

That is not a smaller pile. That is a different kind of work entirely.
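Several fields of that case view can be derived mechanically from a correlated group. The sketch below assumes the same hypothetical event dictionaries used for correlation, plus a `detail` string per event. `summarize_case` is an illustrative name. It builds the tool list, the time span, the impacted assets and accounts, and an ordered timeline. The case title and the suggested next step are left out, since those would come from detection logic or a response playbook rather than from simple aggregation.

```python
from __future__ import annotations

from datetime import datetime, timedelta


def summarize_case(events: list[dict]) -> dict:
    """Collapse a correlated group of events into one case summary."""
    ordered = sorted(events, key=lambda e: e["time"])
    span = ordered[-1]["time"] - ordered[0]["time"]
    return {
        "tools": sorted({e["source"] for e in ordered}),
        "span_minutes": int(span.total_seconds() // 60),
        "hosts": sorted({e["host"] for e in ordered if e["host"]}),
        "users": sorted({e["user"] for e in ordered if e["user"]}),
        # One line per event, oldest first, for the case timeline.
        "timeline": [(e["time"].isoformat(), e["source"], e["detail"]) for e in ordered],
    }
```

Fed three events from three tools spread over 12 minutes, this yields `span_minutes == 12` and a single host and account, which is the raw material for a line like “Three tools fired within 12 minutes of each other.”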

Manual alert investigation takes 30 to 45 minutes per alert in a traditional environment, according to industry research. With AI-assisted investigation, a well-correlated case can bring that down to under 3 minutes.

Turning This Into a Repeatable System

Context and correlation only do their job when they are built into the workflow. Doing this manually every once in a while gets you a good week. Building it as a system gets you lasting results.

The Steps to Build a Noise-Reduction Workflow

Step 1: Audit where your noise is coming from. Not all noise comes from the same place. Start by tracking which tools generate the highest volume of alerts, what percentage of those are false positives, and which alert types never turn into real investigations. That audit tells you exactly where to focus first.
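The Step 1 audit can start as a short script over closed alerts exported from your ticketing system or SIEM. This sketch assumes each closed alert is a dictionary with hypothetical `tool`, `rule`, and `disposition` keys, where disposition is one of `true_positive`, `false_positive`, or `benign`. It answers the three audit questions: which tools are loudest, what share of their alerts are false positives, and which alert types never turned into a real investigation.

```python
from __future__ import annotations

from collections import Counter


def audit(closed_alerts: list[dict]) -> list[tuple[str, int, float]]:
    """Return (tool, alert_count, false_positive_rate), loudest tool first."""
    volume = Counter(a["tool"] for a in closed_alerts)
    false_pos = Counter(a["tool"] for a in closed_alerts if a["disposition"] == "false_positive")
    rows = [(tool, n, false_pos[tool] / n) for tool, n in volume.items()]
    return sorted(rows, key=lambda row: row[1], reverse=True)


def never_investigated(closed_alerts: list[dict]) -> list[str]:
    """Alert types (rules) that never produced a true positive."""
    outcomes: dict[str, set[str]] = {}
    for a in closed_alerts:
        outcomes.setdefault(a["rule"], set()).add(a["disposition"])
    return sorted(rule for rule, seen in outcomes.items() if "true_positive" not in seen)
```

The rules returned by `never_investigated` are the first candidates for enrichment or tuning, and rerunning the same script each month doubles as the Step 5 review.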

Step 2: Enrich alerts automatically before they reach the analyst queue. Pulling context manually for each alert is the biggest time sink in any SOC. Build enrichment into your pipeline so that asset value, user history, and threat intel are already attached to each alert when the analyst sees it.

Step 3: Set correlation rules to group related events. Start with simple rules: same user, same host, same 15-minute window. Build from there. Over time, your rules can match behavioral patterns and known attack sequences rather than just raw data points.
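Moving from “same user, same host, same window” to “known attack sequence” can begin with something as simple as checking whether a case’s events hit certain tactics in order. This is a hedged sketch: the pattern below (credential access followed later by lateral movement) is a hypothetical rule, the tactic names are MITRE ATT&CK tactics, and it assumes each event was already tagged with a `tactic` upstream, for example by the detection rule that produced it.

```python
from __future__ import annotations

# Hypothetical rule: credential access followed later by lateral movement.
# Tactic names follow MITRE ATT&CK; per-event tactic tagging is assumed upstream.
CRED_THEN_LATERAL = ("Credential Access", "Lateral Movement")


def matches_sequence(case_events: list[dict], pattern: tuple[str, ...] = CRED_THEN_LATERAL) -> bool:
    """True if the pattern's tactics appear in time order, with gaps allowed."""
    ordered = sorted(case_events, key=lambda e: e["time"])  # any sortable timestamp
    tactics = iter(e["tactic"] for e in ordered)
    # `step in tactics` consumes the iterator up to the first match, so each
    # later step can only match an event that comes after the previous one.
    return all(step in tactics for step in pattern)
```

Allowing gaps matters: real intrusions interleave discovery and execution activity between the steps you care about, and a rule that required adjacent events would miss them.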

Step 4: Use risk-based scoring so high-impact cases surface first. Severity alone is not enough. A medium-severity alert on a critical production server deserves more attention than a high-severity alert on an isolated test environment. Score cases based on asset value, user sensitivity, and real-world business impact.
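A worked version of the Step 4 example helps show why severity alone misleads. The sketch multiplies three hypothetical weights, alert severity, asset value, and user sensitivity, into a single score. The tier names and numbers are illustrative assumptions that each team would calibrate for its own environment.

```python
from __future__ import annotations

# Hypothetical weights; tune these to your own environment.
SEVERITY = {"low": 1, "medium": 2, "high": 3, "critical": 4}
ASSET_VALUE = {"isolated_test": 1, "internal": 3, "critical_production": 5}
USER_SENSITIVITY = {"standard": 1, "privileged": 2}


def risk_score(severity: str, asset_tier: str, user_tier: str) -> int:
    """Combine severity with business context multiplicatively."""
    return SEVERITY[severity] * ASSET_VALUE[asset_tier] * USER_SENSITIVITY[user_tier]


def rank(cases: list[dict]) -> list[dict]:
    """Order cases so the highest combined risk surfaces first."""
    return sorted(
        cases,
        key=lambda c: risk_score(c["severity"], c["asset_tier"], c["user_tier"]),
        reverse=True,
    )
```

With these weights, a medium-severity alert on a critical production server scores 2 × 5 × 1 = 10, while a high-severity alert on an isolated test machine scores 3 × 1 × 1 = 3, so the production case ranks first. Multiplying rather than adding means a low-value asset pulls the whole score down, which matches the intent that context, not raw severity, decides priority.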

Step 5: Review and tune regularly. What was noisy last quarter may be a real signal today. Alert rules need to evolve as your environment changes. Schedule monthly reviews of your top false positive sources and adjust accordingly.

How Secure.com Fits Into This

Secure.com automates enrichment, cross-tool correlation, and risk-based scoring out of the box. When an alert fires, the platform immediately pulls asset context, user behavior history, and threat intelligence from connected sources. Related events across your SIEM, EDR, identity tools, and cloud infrastructure get grouped automatically into a single, decision-ready case.

Analysts do not log into six different tools to piece together what happened. They open one case with a full timeline, the affected assets, the enrichment already applied, and a clear next step.

The result: 70% less manual triage work and incident response that is 45 to 55% faster, based on outcomes reported by organizations using Secure.com’s Digital Security Teammate for SOC operations.

Conclusion

Moving from a flood of alerts to clean, actionable cases is not a technology problem. It is a workflow design problem. Teams that add context and correlation stop chasing individual alerts and start doing actual security work.

The shift is straightforward in concept: enrich every alert before it reaches your analysts, group related events into one story, and score cases by real business risk rather than raw severity. Build that process into a repeatable system, tune it regularly, and your team will spend their time on investigations that matter instead of sorting through noise that does not.

Secure.com is built to run that process automatically, so your analysts can focus on the decisions that actually require a human.