TL;DR

Trust isn’t blind faith. It’s confidence earned through transparency, control, and proven reliability. Digital Security Teammates resolve the trust paradox in security automation through governed autonomy, while keeping human-in-the-loop oversight.

Introduction: The Trust Paradox in Security Automation

Most organizations now invest in AI automation, yet few security teams will let it run without human approval. The reason: one misconfigured automation in production can cause more damage than the threat it’s meant to stop.

Security professionals face a paradox. They need AI to handle overwhelming alert volumes: SOCs receive thousands of alerts daily (industry average: 11,000+ per day), and up to 80% go uninvestigated due to resource constraints. Yet they fear giving AI execution authority.

Why Trust Matters in Security Automation

The Cost of Mistrust is Measurable

Every SOC team handles thousands of alerts every day, yet most are never investigated. When teams can’t trust automation to help, real threats hide in the noise while analysts drown in false positives.

The Cost of Mistakes is Severe

When automation goes wrong, it can disrupt your entire business. Opaque automated decisions create compliance headaches: without clear audit trails, explaining to regulators or leadership what actually happened becomes nearly impossible.

The Human Toll is Real

Consider the human impact. Alert fatigue isn’t just a buzzword; it drives genuine analyst burnout, and security analysts consistently report both. Add missed threats and inefficient triage workflows to the mix, and you’ve got a compounding problem that’s only getting worse.

Trust Bridges Capability and Deployment

Organizations invest in powerful automation tools but don’t trust them enough to let them act. The result is a security leverage gap: defenders work harder but achieve less.

The Four Pillars of Digital Security Teammates (DSTs)

Pillar 1: Explainability

Instead of just flagging alerts, Digital Security Teammates give clear reasoning for every recommendation, which analysts can review.

Using Context-aware Explanations

- L1 analysts receive concise summaries focused on triage decisions

- L3 threat hunters get technical details about decision logic and data correlations

Explainability Prevents Blind Trust

- When analysts understand the “why” behind recommendations, they make informed decisions about when to follow guidance and when to override it.

Pillar 2: Auditability

Immutable audit logs capture every action, decision, and workflow execution with timestamped activity trails. This creates a complete chain of custody for compliance, forensics, and post-incident review.
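One common way to make a log tamper-evident is to hash-chain it. Each entry stores the hash of the entry before it, so editing or reordering any past record breaks verification. The sketch below illustrates that idea only; it is not the platform’s actual logging mechanism. The `AuditLog` class and its field names are hypothetical. Production systems typically add write-once storage and signed checkpoints on top.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Hypothetical append-only log; each entry commits to the previous entry's hash."""

    def __init__(self):
        self.entries = []

    def append(self, actor, action, evidence):
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,        # a human analyst or the teammate
            "action": action,
            "evidence": evidence,  # must be JSON-serializable
            "prev_hash": prev_hash,
        }
        # Hash a canonical JSON encoding so verification is reproducible.
        payload = json.dumps(record, sort_keys=True).encode()
        record["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(record)
        return record

    def verify(self):
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode()
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Usage: append the teammate’s action and the analyst’s approval, then call `verify()`. It returns `True` until any stored field is altered.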

Complete Visibility into Teammate Operations

Activity Monitors show what the teammate did, when, and based on what evidence. Leadership, auditors, and regulators can verify that automated actions follow approved processes.

Auditability Enables Accountability

When something goes wrong—or right—teams can trace exactly what happened and why. This supports continuous improvement and regulatory compliance.

Pillar 3: Reversibility

Any automated action can be reviewed, modified, or rolled back by human operators. This safety net prevents mistakes from becoming disasters.
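Rollback is easiest when every automated action is registered together with its inverse. The minimal sketch below keeps a journal of executed actions and undoes them in reverse order. The `ReversibleAction` and `ActionJournal` names are hypothetical. The "firewall" is just an in-memory set standing in for a real control.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class ReversibleAction:
    """An action paired with the operation that reverses it (hypothetical model)."""
    name: str
    do: Callable[[], None]
    undo: Callable[[], None]


@dataclass
class ActionJournal:
    """Records executed actions so an operator can roll them back, newest first."""
    executed: List[ReversibleAction] = field(default_factory=list)

    def run(self, action: ReversibleAction) -> None:
        action.do()
        self.executed.append(action)

    def rollback_last(self) -> str:
        action = self.executed.pop()
        action.undo()
        return action.name

    def rollback_all(self) -> List[str]:
        undone = []
        while self.executed:
            undone.append(self.rollback_last())
        return undone


# Usage: an in-memory blocklist stands in for a real firewall.
blocked = set()
journal = ActionJournal()
journal.run(ReversibleAction(
    "block 203.0.113.7",
    do=lambda: blocked.add("203.0.113.7"),
    undo=lambda: blocked.discard("203.0.113.7"),
))
```

Reversing in last-in, first-out order matters when later actions depend on earlier ones.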

Human-in-the-loop approval

Low-risk, monotonous tasks execute automatically. Medium-risk actions present recommendations for review. High-risk decisions require human approval.
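The three tiers above map naturally onto a small policy table. In the sketch below, only low-risk actions run without a named approver. Medium-risk actions surface as recommendations for review, and high-risk actions are blocked until approved. The `Risk`/`Mode` enums and the `may_execute` helper are hypothetical illustrations, not a documented API.

```python
from enum import Enum
from typing import Optional


class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class Mode(Enum):
    AUTO_EXECUTE = "execute automatically"
    RECOMMEND = "present recommendation for review"
    REQUIRE_APPROVAL = "block until a human approves"


# Hypothetical default policy; a SOC would tune this per action type.
POLICY = {
    Risk.LOW: Mode.AUTO_EXECUTE,
    Risk.MEDIUM: Mode.RECOMMEND,
    Risk.HIGH: Mode.REQUIRE_APPROVAL,
}


def handling_mode(risk: Risk) -> Mode:
    return POLICY[risk]


def may_execute(risk: Risk, approved_by: Optional[str] = None) -> bool:
    """Low-risk actions run on their own; anything else needs a named human."""
    if handling_mode(risk) is Mode.AUTO_EXECUTE:
        return True
    return approved_by is not None
```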

Learn from near-misses

When Digital Security Teammates suggest an action that analysts modify or reject, the platform captures that feedback to improve future recommendations. Boundaries adjust based on the team’s actual preferences and risk tolerance.
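A simple way to picture this feedback loop is to track analyst verdicts per action type over a sliding window. From that history, the system can suggest (not apply) boundary changes for a human to confirm. The window size and promote/demote thresholds below are hypothetical tuning knobs, and `FeedbackTracker` is an illustrative name only.

```python
from collections import defaultdict, deque

VERDICTS = ("accepted", "modified", "rejected")


class FeedbackTracker:
    """Tracks analyst verdicts per action type and suggests boundary changes.

    Suggestions are for a human to confirm; thresholds are hypothetical.
    """

    def __init__(self, window=50, promote_at=0.95, demote_at=0.70):
        self.window = window
        self.promote_at = promote_at
        self.demote_at = demote_at
        # Keep only the most recent `window` verdicts per action type.
        self.history = defaultdict(lambda: deque(maxlen=window))

    def record(self, action_type, verdict):
        if verdict not in VERDICTS:
            raise ValueError(f"unknown verdict: {verdict}")
        self.history[action_type].append(verdict)

    def acceptance_rate(self, action_type):
        verdicts = self.history[action_type]
        if not verdicts:
            return 0.0
        return sum(v == "accepted" for v in verdicts) / len(verdicts)

    def suggestion(self, action_type):
        # Wait for a full window of evidence before proposing any change.
        if len(self.history[action_type]) < self.window:
            return "keep"
        rate = self.acceptance_rate(action_type)
        if rate >= self.promote_at:
            return "propose more autonomy"
        if rate < self.demote_at:
            return "propose tighter review"
        return "keep"
```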

Pillar 4: Governed Autonomy

Digital Security Teammates act autonomously on approved tasks and escalate to humans when they hit blockers or high-risk scenarios.

Customizable boundaries align with team culture

- SOC leaders tailor the teammate’s scope, authority, and behavior to align with operational workflows.

- Some teams grant broader autonomy; others start more conservatively.

Automatic escalation maintains safety

When the teammate encounters a situation outside its approved boundaries or lacks required access, it escalates to humans with full context about what it was trying to accomplish and why.
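The two escalation triggers described here are an action outside approved scope and missing access. Both can be checked before anything runs. In the minimal sketch below, the check returns an escalation record that carries the goal and evidence, so the human sees what the teammate was trying to do and why. `Boundaries`, `Escalation`, and `attempt` are hypothetical names, and the permission strings are made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional, Set


@dataclass
class Boundaries:
    """Scope and access granted by SOC leadership (hypothetical model)."""
    allowed_actions: Set[str]
    granted_access: Set[str]


@dataclass
class Escalation:
    action: str
    goal: str      # what the teammate was trying to accomplish
    reason: str    # why it stopped
    evidence: dict = field(default_factory=dict)


def attempt(action: str, needs_access: str, goal: str, evidence: dict,
            bounds: Boundaries) -> Optional[Escalation]:
    """Return None if the action is within bounds, else an escalation with context."""
    if action not in bounds.allowed_actions:
        return Escalation(action, goal, f"'{action}' is outside approved scope", evidence)
    if needs_access not in bounds.granted_access:
        return Escalation(action, goal, f"missing access: {needs_access}", evidence)
    return None
```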

How Digital Security Teammates Build Trust Over Time

Trust develops in phases, especially when the team is managing an already broken system. Organizations crawl, walk, then run.

Phase 1: Detection & Recommendation (Crawl)

Analysts observe how the teammate thinks, validate accuracy, and identify patterns.

Example: Autonomous Investigation

- Alert triggers.

- Teammate gathers endpoint and network evidence.

- Enriches with threat intelligence.

- Presents a pre-written case summary.

- Analyst reviews reasoning.

- Analyst decides next steps.
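The investigation flow above can be sketched as a single function that gathers, enriches, and summarizes, then stops. Reviewing and deciding stay with the analyst. This is a toy illustration, assuming evidence arrives as plain strings and threat intelligence as an indicator-to-label map. Real connectors to EDR, network, and intel platforms are out of scope, and all names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CaseSummary:
    alert_id: str
    evidence: List[str]
    intel_hits: List[str]
    reasoning: List[str] = field(default_factory=list)
    status: str = "awaiting analyst review"


def investigate(alert: Dict, endpoint_logs: List[str], netflows: List[str],
                threat_intel: Dict[str, str]) -> CaseSummary:
    # Gather endpoint and network evidence that references the alerting host.
    evidence = [e for e in endpoint_logs + netflows if alert["host"] in e]
    # Enrich: keep threat-intel indicators that appear in the gathered evidence.
    intel_hits = [f"{ioc}: {label}" for ioc, label in threat_intel.items()
                  if any(ioc in e for e in evidence)]
    # Record the reasoning so the analyst can review it.
    reasoning = [f"{len(evidence)} events reference {alert['host']}",
                 f"{len(intel_hits)} indicators matched threat intelligence"]
    # Present the summary; the next step is the analyst's decision.
    return CaseSummary(alert["id"], evidence, intel_hits, reasoning)
```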

Goal: Establish baseline performance

Teams calibrate expectations and build confidence through observation.

Phase 2: Supervised Automation (Walk)

Introduce low-risk automated actions with mandatory approval gates. The teammate enriches alerts, correlates data across tools, and proposes specific remediation actions. Humans review and approve before execution.

Example: Case Management

Teammate removes duplicate alerts, applies appropriate playbooks, and routes cases to the right analysts. All changes require approval before affecting production systems.
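Here is a minimal sketch of the case-management step. It assumes two alerts are duplicates when they share the same rule and host, which is a hypothetical grouping key. It also assumes playbook and owner lookups are simple dictionaries. Routing is only staged with `approved` set to `False`, mirroring the approval gate described above.

```python
from typing import Dict, List


def deduplicate(alerts: List[Dict]) -> List[Dict]:
    """Collapse alerts sharing (rule, host) into one case with an occurrence count."""
    cases: Dict[tuple, Dict] = {}  # dicts preserve insertion order (Python 3.7+)
    for a in alerts:
        key = (a["rule"], a["host"])
        if key in cases:
            cases[key]["count"] += 1
        else:
            cases[key] = {"rule": a["rule"], "host": a["host"], "count": 1}
    return list(cases.values())


def propose_routing(cases: List[Dict], playbooks: Dict[str, str],
                    owners: Dict[str, str]) -> List[Dict]:
    """Stage playbook and assignee for each case; nothing is applied until approved."""
    return [
        {**c,
         "playbook": playbooks.get(c["rule"], "manual-triage"),
         "assignee": owners.get(c["rule"], "tier1-queue"),
         "approved": False}
        for c in cases
    ]
```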

Goal: Demonstrate reliability

Track success rates, measure time savings, and collect analyst feedback. Expand automation based on proven performance.

Phase 3: Governed Autonomy (Run)

High-trust, routine tasks execute automatically within approved boundaries, and human oversight shifts to exception handling and complex investigations. Analysts focus on novel threats while the teammate handles repetitive work.

Example: Automated Triage

Low-risk alerts auto-close with documentation. Known-threat patterns trigger immediate containment workflows. High-risk cases escalate to humans with enriched context.
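The three triage paths above can be expressed as an ordered set of rules. In this sketch, known-threat patterns are checked first, so a matching alert is contained even if its risk label is low. That ordering is a design choice, not something the platform is documented to do. The pattern names, risk labels, and `triage` function are hypothetical. Anything that fits no rule falls through to an analyst.

```python
# Hypothetical pattern names; a real deployment would load these from detection content.
KNOWN_THREAT_PATTERNS = {"emotet_c2_beacon", "mimikatz_lsass_access"}


def triage(alert):
    """Return (disposition, documentation note) for one alert."""
    if alert["pattern"] in KNOWN_THREAT_PATTERNS:
        return "contain", f"matched known threat pattern {alert['pattern']}"
    if alert["risk"] == "high":
        return "escalate", "high risk: sent to analyst with enriched context"
    if alert["risk"] == "low":
        # Auto-close still leaves a record of why it was closed.
        return "auto_close", f"closed as low risk; evidence: {alert['evidence']}"
    return "review", "outside automated triage rules: queued for an analyst"
```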

Goal: Full integration

The teammate becomes a trusted partner that amplifies analyst capacity while maintaining governance and oversight.

Real-World Trust in Action

Autonomous Investigation with Transparency

When an alert fires, the teammate:

- Gathers endpoint logs and network flow data

- Checks threat intelligence feeds for source/destination IPs

- Correlates with user behavior baselines

- Examines similar historical incidents

- Presents case summary: Suspected data exfiltration—high confidence based on transfer size, destination reputation, off-hours timing, and user pattern deviation
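To show how a "high confidence" label can stay explainable, the sketch below combines the four signals named in that summary into a score. It also returns the list of signals that fired, so the reasoning travels with the verdict. Every threshold and weight here is a hypothetical placeholder, and this is not the product’s scoring model.

```python
def exfil_confidence(bytes_out, baseline_bytes, dest_reputation, hour, user_deviation):
    """Score a suspected exfiltration from four signals; weights are hypothetical."""
    signals = {
        "transfer size": bytes_out > 10 * baseline_bytes,
        "destination reputation": dest_reputation == "malicious",
        "off-hours timing": hour < 6 or hour >= 22,
        "user pattern deviation": user_deviation > 3.0,  # e.g. a z-score vs. baseline
    }
    weights = {"transfer size": 0.3, "destination reputation": 0.3,
               "off-hours timing": 0.15, "user pattern deviation": 0.25}
    score = round(sum(weights[name] for name, hit in signals.items() if hit), 2)
    label = "high" if score >= 0.7 else "medium" if score >= 0.4 else "low"
    # Return the contributing signals so the analyst sees why, not just what.
    reasons = [name for name, hit in signals.items() if hit]
    return label, score, reasons
```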

Natural Language Interface

- Security analyst asks: “Show me all critical vulnerabilities in production servers patched in the last 30 days.”

- Teammate shares patch dates, vulnerability details, asset owners, and compliance status.

- One-click actions are available to generate reports or create tickets for remaining gaps.

- No query syntax required: just natural language questions with transparent, evidence-backed answers.

Compliance Automation with Comprehensive Audit Trails

Each compliance finding includes:

- Source system and timestamp

- Control mapping and rationale

- Gap identification with risk scoring

- Remediation recommendations with approval workflows

Attack Surface Management

Each discovered asset includes:

- Discovery method and confidence score

- Business context (asset owner, criticality)

- Exposure analysis (open ports, misconfigurations)

- Threat intelligence correlation

- Recommended remediation with impact assessment

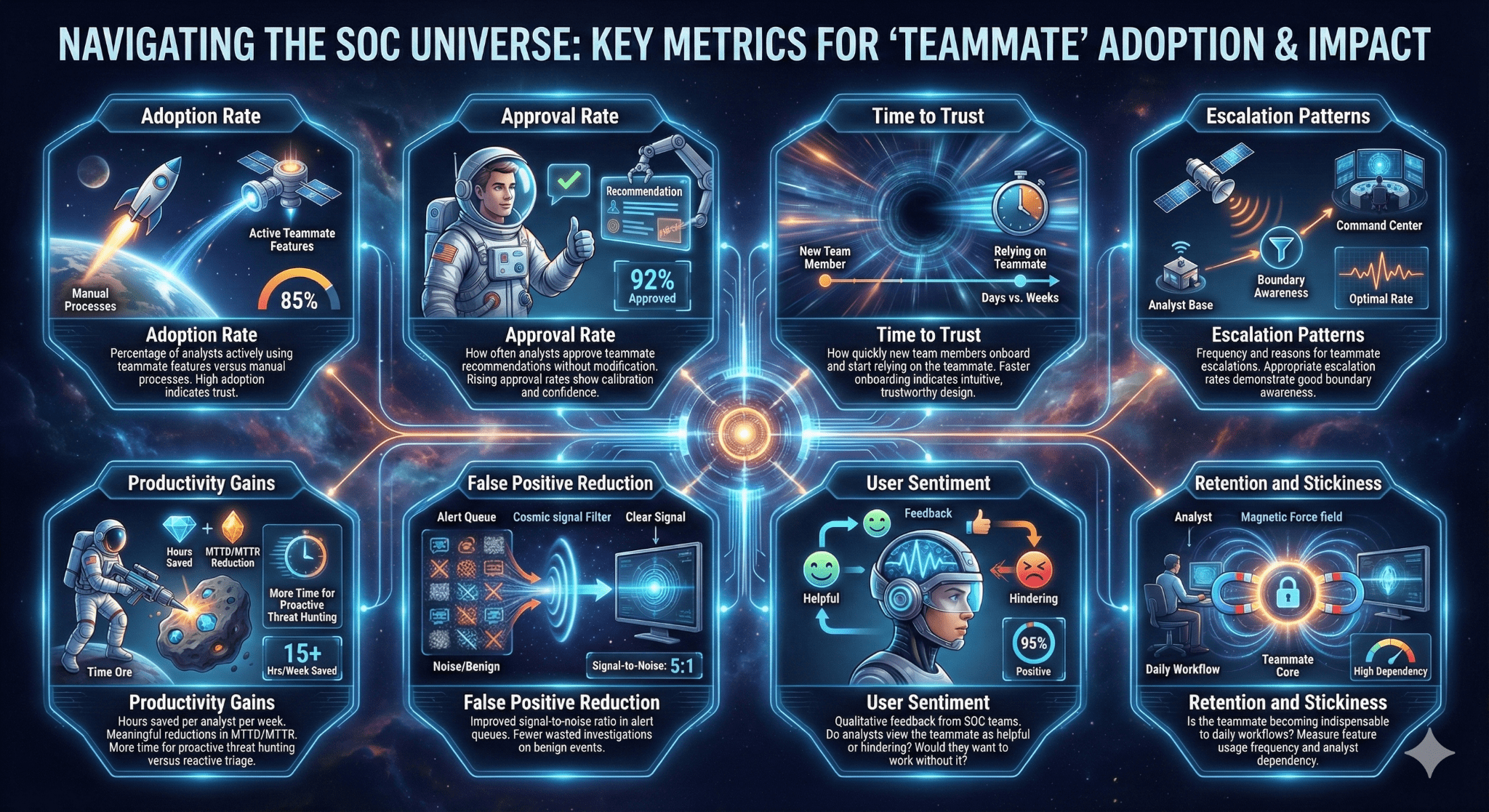

Measuring What Matters: Trust Metrics for Digital Security Teammates

- Adoption Rate

- Approval Rate

- Time to Trust

- Escalation Patterns

- Productivity Gains

- False Positive Reduction

- User Sentiment

- Retention and Stickiness
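Several of these metrics fall out directly from a log of teammate proposals and analyst outcomes. The sketch below computes adoption, approval, and escalation rates under illustrative definitions. It assumes each logged decision has an `outcome` of `approved`, `modified`, `rejected`, or `escalated`, and it counts only unmodified approvals toward the approval rate. The field names and definitions are assumptions, not a standard.

```python
from typing import Dict, List


def trust_metrics(decisions: List[Dict], active_analysts: int,
                  total_analysts: int) -> Dict[str, float]:
    """Compute illustrative trust metrics from a hypothetical decision log."""
    # Escalations are the teammate deferring to humans, not proposals to approve.
    proposals = [d for d in decisions if d["outcome"] != "escalated"]
    escalations = len(decisions) - len(proposals)
    approved = sum(d["outcome"] == "approved" for d in proposals)
    return {
        "adoption_rate": active_analysts / total_analysts if total_analysts else 0.0,
        "approval_rate": approved / len(proposals) if proposals else 0.0,
        "escalation_rate": escalations / len(decisions) if decisions else 0.0,
    }
```

Tracking these over time, rather than as one-off snapshots, shows whether trust is actually growing.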

Overcoming Trust Barriers

“What if the Digital Security Teammate Makes a Mistake?”

Reversibility and approval gates prevent catastrophic errors. Start with low-risk actions. Expand gradually based on demonstrated performance. Every action includes rollback capability.

Mistakes are learning opportunities. When the teammate proposes actions that analysts modify, the platform captures that feedback for continuous improvement of workflows and playbooks.

“How Do I Know the AI isn’t Biased?”

Explainability reveals the data and logic behind every decision. Audit trails enable post-hoc analysis of decision patterns across different asset types, users, or threat categories.

Monitor for anomalies. If the teammate consistently misjudges specific scenarios, the transparency allows you to identify and correct the bias.

“Can I Really Trust a ‘Black Box’?”

Digital Security Teammates are designed not to be black boxes. Every decision includes accessible reasoning. Every action creates an audit trail. Transparency is architectural, not cosmetic.

Context-aware explanations show evidence, reference sources, and describe logic. You see the thinking, not just the conclusion.

“What About Compliance and Regulatory Requirements?”

Immutable audit logs help meet the record-keeping requirements of most regulatory frameworks. Evidence trails document who did what, when, and why, whether the actor was a human or the AI.

Explainability supports GDPR requirements for transparency in automated decision-making. Auditability assists with SOC 2 control documentation and evidence collection. Reversibility provides the governance that regulators expect.

The Future of Trust in Security AI

- The goal isn’t replacing analysts but elevating them from reactive ticket-solvers to proactive threat hunters

- AI-native security platforms will become standard as organizations seek to detect threats faster and respond more efficiently

- The trust gap narrows through explainability, auditability, reversibility, and governed autonomy

- The ultimate vision: AI provides speed, scale, and consistency, while humans provide judgment, creativity, and strategic thinking